Secure, Governed, and Verifiable AI Agents Validated Through Courtroom Simulation

Introduction

Google’s Agent Development Kit (ADK) provides a structured framework for building, testing, and deploying AI agents. ADK works well for research and prototyping, but it does not include the enterprise-grade controls that production environments demand: identity-bound execution, cryptographic provenance, runtime policy enforcement, and tamper-resistant audit trails. This paper presents SecureADK, a security-first extension of ADK that integrates zero-trust runtime enforcement, dataset sealing through OmniSeal, and ledger-backed provenance powered by Hyperledger. We demonstrate these enhancements through a courtroom orchestration use case, contrasting simulations driven by ADK alone with those running on SecureADK. The comparison indicates that although ADK supports functional collaboration among agents, SecureADK is needed to deliver verifiable, auditable, and regulator-ready decision systems suitable for judicial, healthcare, financial, critical-infrastructure, law-enforcement, and defense applications.

AI agents are taking on growing responsibilities across many domains, including legal reasoning, clinical decision support, financial automation, and regulatory reporting. Systems operating in such contexts must satisfy rigorous demands: deterministic reproducibility, identity attribution, evidence integrity, non-repudiation, policy governance, and forensic traceability. Standard ADK orchestration does not provide these assurances natively. SecureADK closes this gap by building security, governance, and provenance directly into the agent runtime.

Courtroom Orchestration as a Stress-Test Scenario

Simulating a courtroom creates a high-stakes, multi-agent, adversarial reasoning environment, making it an ideal proving ground for trust requirements. The agents typically engaged in such a simulation include the judge, prosecution lawyer, defense lawyer, medical expert, jurors, clerk, and evidence processor. They are tasked with exchanging evidence, conducting reasoned debates, accessing documents, reaching decisions, and producing auditable verdicts. This arrangement closely parallels the demands placed on regulated enterprise AI systems.

Courtroom with ADK Alone

Architecture and Flow

In a typical ADK courtroom simulation, the user kicks off the trial, agents trade prompts, tools are invoked directly, evaluators score the outputs, and a verdict is issued.

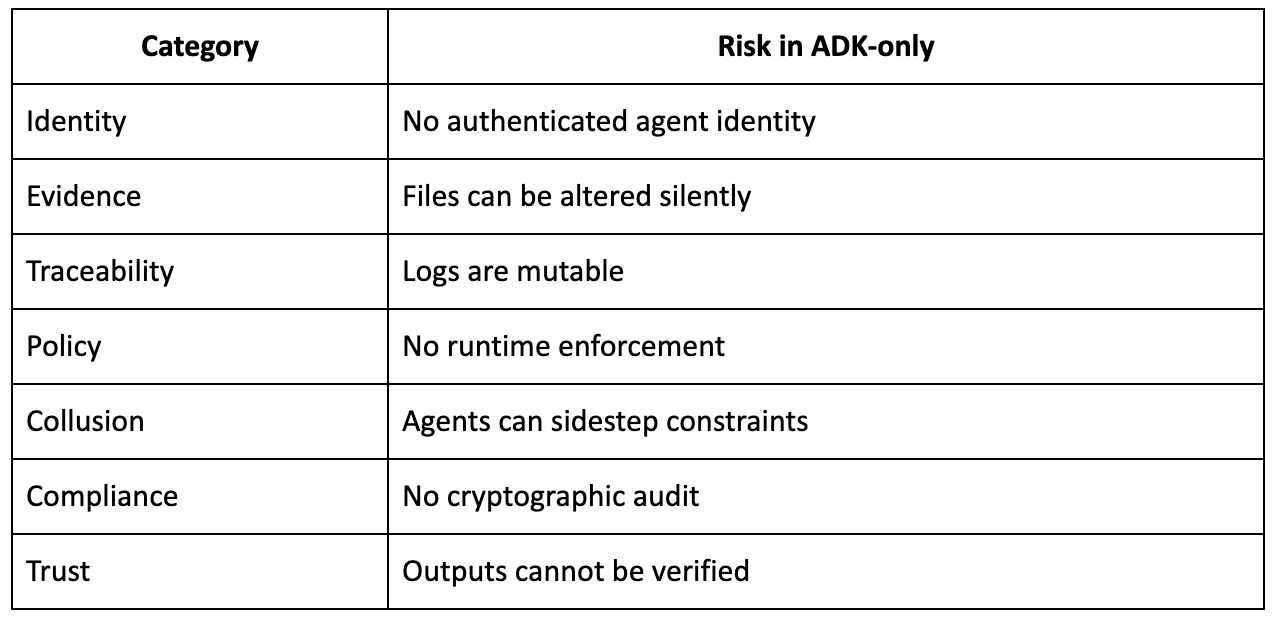

Limitations

Example Failure Modes

- The defense agent tampers with evidence without being detected.

- The medical agent draws on an unverified dataset.

- The juror’s reasoning process cannot be reproduced.

- Tool calls run without appropriate authorization.

- The resulting verdict cannot be audited.

Therefore, while the ADK-only configuration may be acceptable for demonstration purposes, it is unfit for genuine court or regulatory applications.

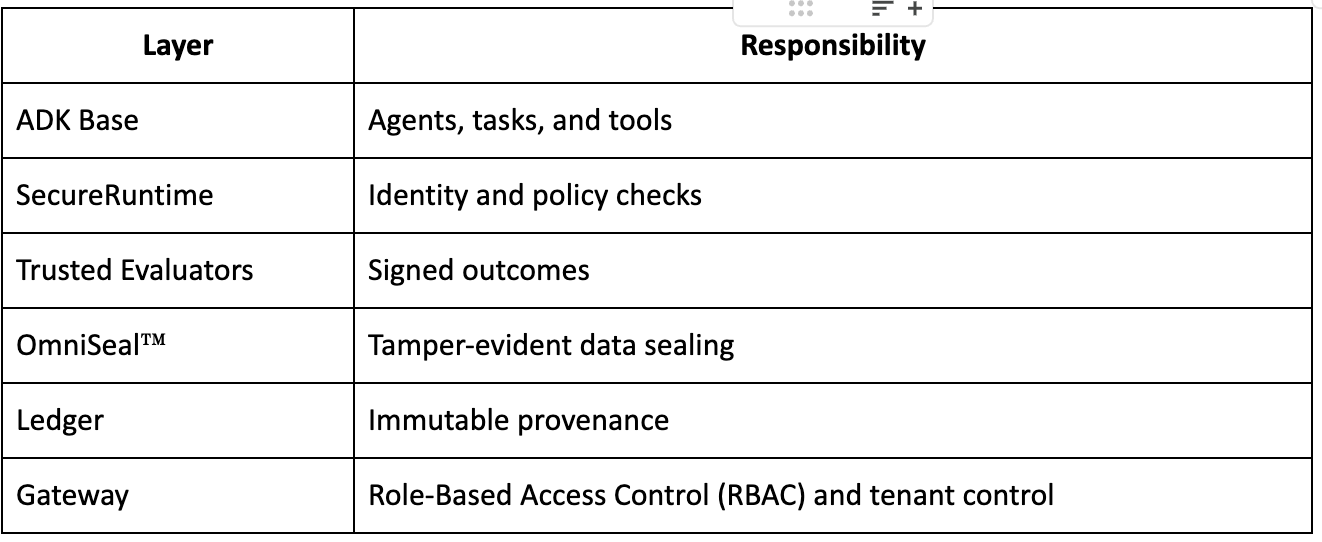

SecureADK Architecture

Layered Security Stack

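SecureADK is built on a layered security architecture that combines cryptographic agent identity, OmniSeal dataset sealing, zero-trust runtime policy enforcement, and a Hyperledger-backed provenance ledger.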

Courtroom with SecureADK

Secure Flow

- Each agent receives a cryptographic identity.

- Evidence is sealed via OmniSeal™.

- Tool calls proceed only after policy approval.

- Evaluations are cryptographically signed.

- Every interaction is committed to the ledger.

- The verdict is sealed and fully reproducible.

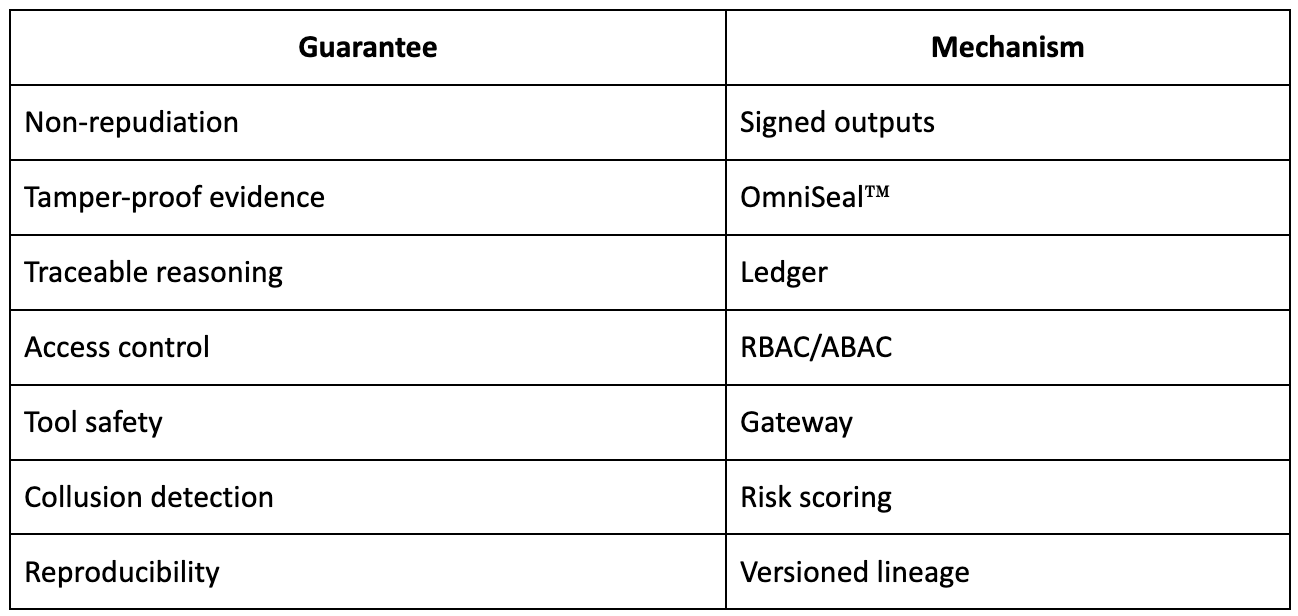
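The identity and policy steps of the secure flow can be illustrated with a minimal sketch. It is not SecureADK's actual API: the names `AGENT_KEYS`, `POLICY`, `sign_request`, `authorize`, and `call_tool` are hypothetical, and HMAC with a per-agent secret stands in for whatever cryptographic identity scheme a real deployment would use (most likely asymmetric keys or certificates).

```python
import hashlib
import hmac

# Hypothetical per-agent secrets (assumption); stand-ins for real key material.
AGENT_KEYS = {
    "prosecution": b"prosecution-demo-secret",
    "defense": b"defense-demo-secret",
}

# Hypothetical policy-as-code table: agent -> allowed (tool, action) pairs.
POLICY = {
    "prosecution": {("evidence_store", "read")},
    "defense": {("evidence_store", "read")},
}


def _message(agent: str, tool: str, action: str) -> bytes:
    """Canonical byte string that identifies one tool request."""
    return f"{agent}|{tool}|{action}".encode()


def sign_request(agent: str, tool: str, action: str) -> str:
    """Produce a tag proving the request was made with the agent's key."""
    return hmac.new(AGENT_KEYS[agent], _message(agent, tool, action),
                    hashlib.sha256).hexdigest()


def authorize(agent: str, tool: str, action: str, tag: str) -> bool:
    """Check identity first, then policy; deny by default."""
    key = AGENT_KEYS.get(agent)
    if key is None:
        return False
    expected = hmac.new(key, _message(agent, tool, action),
                        hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, tag):
        return False
    return (tool, action) in POLICY.get(agent, set())


def call_tool(agent: str, tool: str, action: str, tag: str) -> str:
    """Run the tool only after the request has been authorized."""
    if not authorize(agent, tool, action, tag):
        raise PermissionError(f"{agent} may not {action} {tool}")
    return f"{tool}.{action} executed for {agent}"
```

In this sketch a prosecution request to read the evidence store succeeds, while a write request, or a read request replayed under the defense agent's name, is rejected before the tool runs.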

Security Guarantees

Example Secure Trial

- Evidence Handling: Evidence is uploaded, sealed, hashed, and accompanied by a matching ledger entry.

- Prosecution Access: The agent’s identity is authenticated, policy compliance is validated, and permissions are confined to read-only access.

- Medical Expert: The dataset version is certified, and the evaluation is signed.

- Verdict: The verdict is signed by the judge agent, linked to every relevant input, and remains auditable.

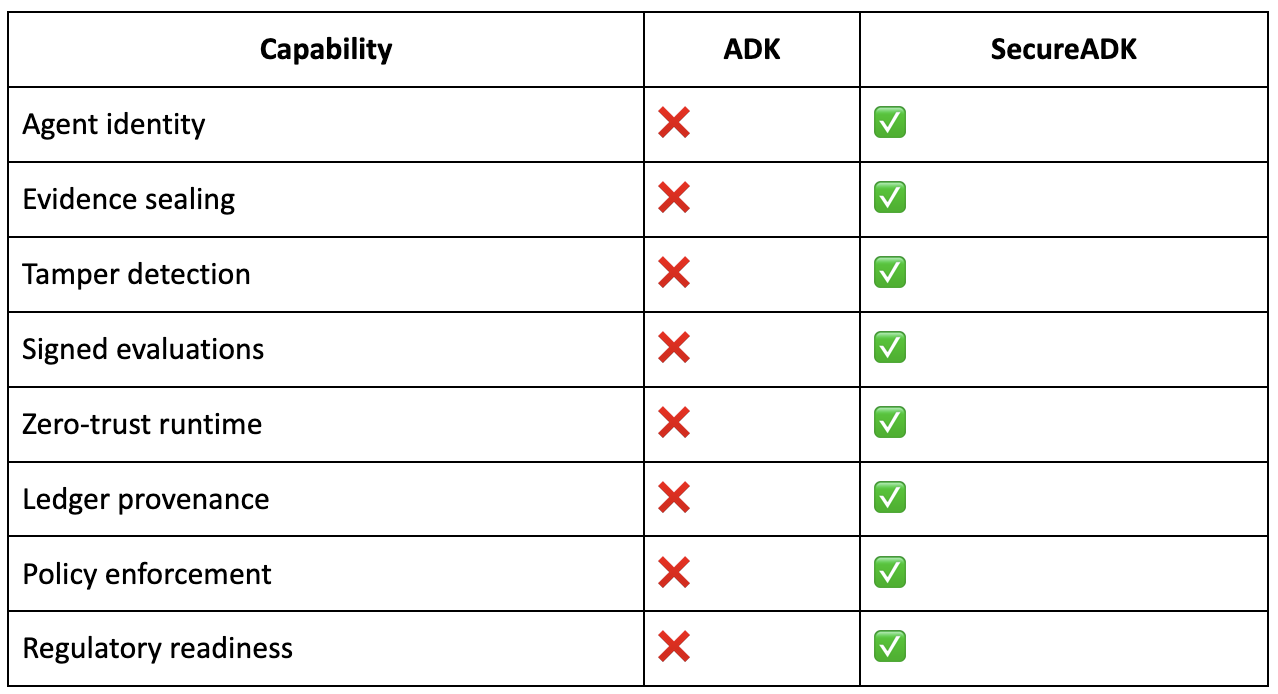
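The evidence-handling and verdict steps of the example trial can be sketched as follows. This is an illustration under stated assumptions, not the OmniSeal mechanism, which is proprietary and not described in this paper: a plain SHA-256 digest stands in for the seal, HMAC with a hypothetical `JUDGE_KEY` stands in for the judge agent's signature, and the function names are invented for the example.

```python
import hashlib
import hmac
import json

# Hypothetical signing key for the judge agent (assumption, demo only).
JUDGE_KEY = b"judge-demo-key"


def seal(data: bytes) -> str:
    """Stand-in for sealing: a SHA-256 digest of the evidence bytes."""
    return hashlib.sha256(data).hexdigest()


def _payload(decision: str, inputs: list) -> bytes:
    """Canonical serialization of the fields covered by the signature."""
    return json.dumps({"decision": decision, "inputs": inputs},
                      sort_keys=True).encode()


def sign_verdict(decision: str, evidence_seals: list) -> dict:
    """Bind a decision to the seals of every input it relied on."""
    inputs = sorted(evidence_seals)
    signature = hmac.new(JUDGE_KEY, _payload(decision, inputs),
                         hashlib.sha256).hexdigest()
    return {"decision": decision, "inputs": inputs, "signature": signature}


def verify_verdict(verdict: dict, evidence: list) -> bool:
    """Check the signature, then check the evidence still matches its seals."""
    expected = hmac.new(JUDGE_KEY,
                        _payload(verdict["decision"], verdict["inputs"]),
                        hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, verdict["signature"]):
        return False
    return sorted(seal(item) for item in evidence) == verdict["inputs"]
```

An auditor holding the original exhibits can call `verify_verdict` to confirm both that the verdict was not altered and that it refers to exactly those exhibits; changing either the decision or a single byte of evidence makes verification fail.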

Comparative Analysis

The table below summarizes how the capabilities of ADK and SecureADK compare:

Formal Properties

SecureADK contributes several formal properties to the orchestration environment:

- Integrity: Every artifact is cryptographically sealed.

- Accountability: Every action is bound to a specific identity.

- Determinism: Decision graphs can be replayed.

- Governance: Policy-as-code is enforced.

- Auditability: An immutable provenance ledger guarantees transparency.

- Isolation: Tenant and sandbox separation is preserved.
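The integrity and auditability properties rest on tamper-evident logging. A minimal sketch of the idea, assuming a simple in-memory hash chain rather than the Hyperledger-backed ledger the paper describes, is shown below; `GENESIS`, `entry_hash`, `append`, and `verify` are hypothetical names used only for illustration.

```python
import hashlib
import json

# Placeholder "previous hash" for the first entry (assumption).
GENESIS = "0" * 64


def entry_hash(prev_hash: str, record: dict) -> str:
    """Hash a record together with the hash of the entry before it."""
    payload = json.dumps(record, sort_keys=True)
    return hashlib.sha256((prev_hash + payload).encode()).hexdigest()


def append(ledger: list, record: dict) -> None:
    """Append a record, chaining it to the current tail of the ledger."""
    prev = ledger[-1]["hash"] if ledger else GENESIS
    ledger.append({"record": record, "prev": prev,
                   "hash": entry_hash(prev, record)})


def verify(ledger: list) -> bool:
    """Recompute every link; any edited or reordered entry breaks the chain."""
    prev = GENESIS
    for entry in ledger:
        if entry["prev"] != prev:
            return False
        if entry["hash"] != entry_hash(prev, entry["record"]):
            return False
        prev = entry["hash"]
    return True
```

Because each entry's hash covers the previous entry's hash, rewriting an early record invalidates every later link, which is what lets an auditor detect after-the-fact edits. A distributed ledger adds replication and consensus on top of this basic property.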

Broader Implications

- Legal Systems: Supports evidence admissibility and reproducible verdicts.

- Healthcare: Enables HIPAA-compliant AI reasoning.

- Finance: Supports auditable trading agents.

- Defense: Establishes trusted command chains.

With SecureADK, an existing multi-agent ADK courtroom stack evolves from simulation-grade into forensic-grade, regulator-ready infrastructure.

Closing Remarks

SecureADK acts as a security and governance layer built atop ADK. ADK supplies the foundational orchestration framework for AI agents, but it does not deliver the trust, compliance, and audit capabilities required in enterprise or regulated settings. SecureADK adds them through data sealing, signed reasoning, enforced identity, and comprehensive provenance logging. Both layers are needed: ADK provides the orchestration and operational backbone, while SecureADK keeps those operations trustworthy, compliant, and auditable, making the combined system fit for high-stakes, production-grade AI deployments.

About PureCipher Inc.

PureCipher is a leader in AI security and data integrity, dedicated to safeguarding national interests through advanced, quantum-resilient technologies. Its Artificial Immune System™ platform features OmniSeal™—a patent-pending tamper-evident technology—along with Noise-Based Communication for stealth transmission, Fully Homomorphic Encryption (FHE)–enabled AI processing, and secure, transparent AI agents. By drawing on deep expertise in AI, quantum computing, and cybersecurity, PureCipher™ pursues its goal of building a safer and more trustworthy world.

Contact: PureCipher™ Communications

Email: media@purecipher.com

Website: www.purecipher.com